Exploring VDBs in Lightwave 3D

This tutorial collects experiments and different views on using the OpenVDB toolkit in Lightwave 3D.

The Idea

The exploration here is about using OpenVDB to create landscapes. It's common to use nodal displacement with procedural textures on an object until it looks like a landscape. This method needs a heavily subdivided mesh to work properly, which is expensive for the render engine to calculate and leads to longer render times.

The approach I propose is to use the mesh displacement as a base to create an OpenVDB SDF. In doing so, the 3D artist can balance the number of subdivisions in the mesh against the resolution of the OpenVDB grid to obtain, in the end, a more organic mesh with an optimized subdivision level that consumes less memory and therefore renders faster.

We can save the VDB mesh as an OpenVDB SDF. This frees the render engine from having to freeze and calculate the SDF before rendering. In my tests I got faster renders with this method: when I use traditional displacement on a mesh, the render time is always higher.

The Lightwave artist may be tempted to use the OpenVDB noise node to do this work directly on the VDB SDF. This is a huge mistake. Lightwave has to do more calculations and the entire system gets a lot slower, to the point where the workflow becomes unworkable. If, instead, the 3D artist uses the nodal mesh displacement as the driver for the SDF displacement, Lightwave handles the SDF smoothly.

In this example, I'll create a terrain using nodal displacement on a mesh that will be converted into an OpenVDB SDF. This way, we'll get a more organic terrain than with the traditional method alone. Next I'll show you the lookdev process, which is procedural and uses Lightwave's native procedural textures. This includes the optimization of the render settings. After that, we'll see how to bake the textures to use on our VDB mesh (yes! we will not convert it to a Lightwave object, because this would trigger a longer render time). Then we will apply the textures, render the scene, analyze the results and think about possible workflows. Let's dive in.

The beginning: how to set up the displacement correctly.

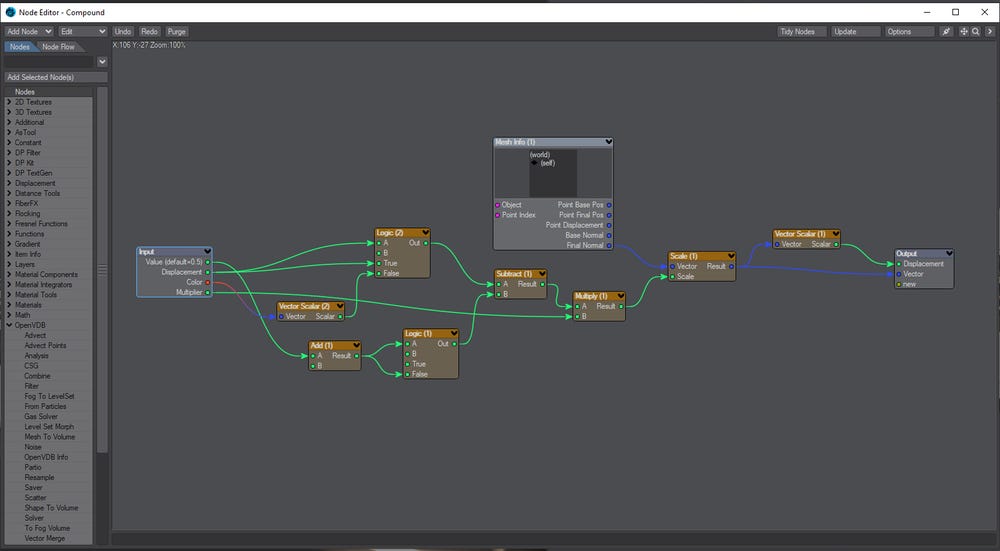

In my nodal network you can see a compound node called Displacement. Inside there's a nodal setup that is not complex: it's just the node logic for ZBrush displacements, tweaked for more general use. Let's understand it.

The subtract node is the main part: it subtracts 0.5 from the input. This is what we have to do when dealing with ZBrush displacements, because the zero point is actually mid-gray (0.5). In a common setup, we connect this result to the scalar socket of the vector scale node. In the vector input we connect the normals output, and we have our displacement.
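The mid-gray logic can be sketched in plain Python. This is only an illustration, not Lightwave code: the function name and its parameters are hypothetical, and points and normals are simple tuples.

```python
def zbrush_displace(position, normal, value, scale=1.0, midpoint=0.5):
    """Move a point along its normal by a displacement value centred on mid-gray.

    value is the texture sample in the 0..1 range; midpoint (0.5) maps to
    "no displacement", values above push outwards, values below push inwards.
    All names here are hypothetical, chosen for this sketch only.
    """
    amount = (value - midpoint) * scale  # the subtract node, then the scale factor
    return tuple(p + n * amount for p, n in zip(position, normal))


up = (0.0, 1.0, 0.0)
origin = (0.0, 0.0, 0.0)
for sample in (0.0, 0.5, 1.0):
    print(sample, zbrush_displace(origin, up, sample))
```

A sample of 0.5 leaves the point where it is, which is why the subtraction has to happen before the normal is scaled.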

What I did was replace the normals input with a Mesh Info node, because I was creating a compound node to be used in any other scene. It provides the normals information of the mesh I'm using.

The logic node at the top checks whether the scalar input (displacement) is not zero. If it is not zero, it passes the value of the displacement output. If the value is zero, it passes the value of the color output. I did this so that I can use either a scalar node (like a procedural texture) or an image as input, for example. Note that I added a vector scalar node to convert the color vector input to a scalar value. In this case, the vector scalar node is set up to pass the maximum value.

The logic node at the bottom checks whether the input (Value) is equal to zero. If true, it passes the value of 0.5; if not, it passes the value defined by the artist. The add node is only used as a placeholder to keep the nodal graph better organized: it adds nothing. The multiply node does exactly what its name says: it multiplies the displacement value by a factor defined by the artist. The default value is one.

The last vector scalar node is used to output a scalar value: it outputs the maximum value. And this is my Displacement compound node, which I saved so that I can use it in any other scene I create.
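The decision logic of the compound node can be summarized in a few lines of plain Python. This is a sketch of one reading of the graph, not Lightwave code: the function and argument names are hypothetical, and it assumes that the Value input feeds the subtract node as the mid-point.

```python
def compound_displacement(displacement=0.0, color=(0.0, 0.0, 0.0), value=0.0, factor=1.0):
    """Return the scalar amount that would scale the mesh normal.

    Hypothetical stand-in for the compound node described above.
    """
    # Top logic node: use the scalar input if it is non-zero,
    # otherwise fall back to the color input reduced to its maximum channel.
    source = displacement if displacement != 0.0 else max(color)

    # Bottom logic node: a Value of zero means "use the mid-gray default".
    # Assumption: this value is what the subtract node removes from the input.
    midpoint = 0.5 if value == 0.0 else value

    # Subtract node followed by the multiply node (factor defaults to one).
    return (source - midpoint) * factor


print(compound_displacement(displacement=0.75))       # scalar texture as input
print(compound_displacement(color=(0.2, 0.9, 0.4)))   # image/color as input
print(compound_displacement(displacement=1.0, value=0.25, factor=2.0))
```

One side effect of this design is that a scalar sample that happens to be exactly zero is treated as "not connected" and the color input is used instead.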

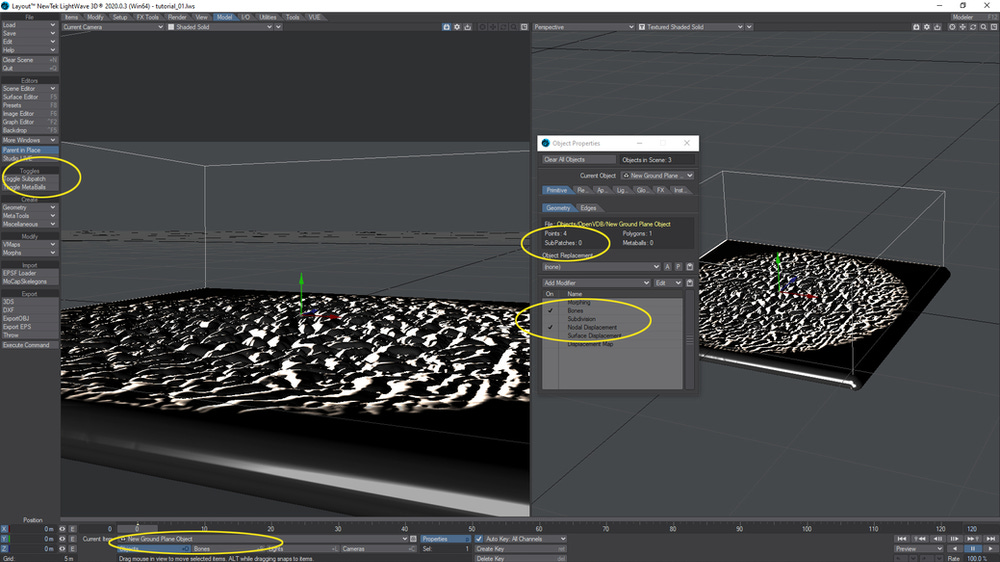

The scene setup.

For a landscape we need a plane. I created the plane with the default settings, 10 meters by 10 meters and with 100 by 100 subdivisions. I enabled the nodal displacement and did a temporary basic nodal setup.

Next, I created a null and named it volume. Using the scene editor, I set the plane to be hidden in the viewport and from the render engine.

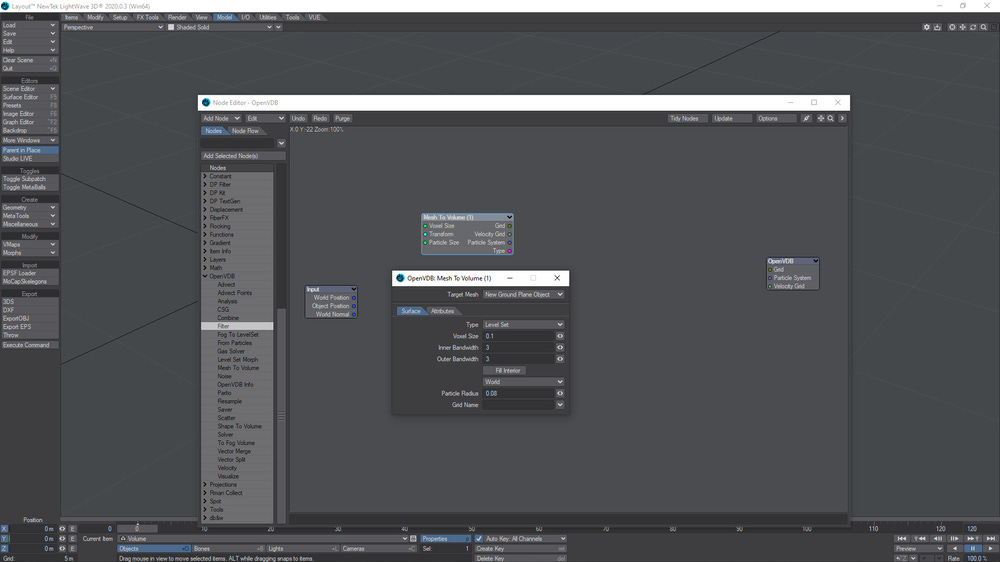

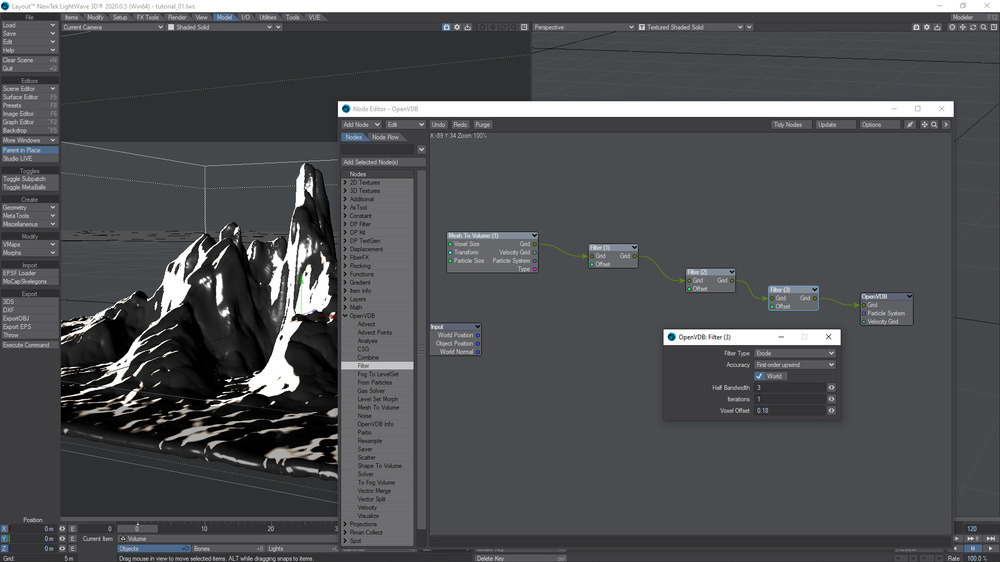

In the OpenVDB Evaluator node graph, select the mesh to volume node to convert the plane geometry to an OpenVDB SDF (level set).

Well, something is wrong after we've connected the mesh to volume node to the grid output...

When this kind of thing happens, we have two options: either adjust the voxel size or use the filter node to dilate the vdb and create more mass. In this example I chose the latter.
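Why dilating "creates more mass" can be seen with a toy level set in plain Python. This sketch assumes the problem is that the flat plane has no thickness, so no voxel ends up inside the surface; it uses the usual convention that distances are negative inside. The helper names are hypothetical and this is not how the Lightwave filter node is implemented.

```python
def sheet_distance(y):
    """Distance from a sample at height y to an infinitely thin sheet at y = 0."""
    return abs(y)


def dilate(distance, amount):
    """Dilating a level set shifts every distance value down by the amount."""
    return distance - amount


voxel_size = 0.05
samples = [i * voxel_size for i in range(-3, 4)]  # a column of 7 voxel centres

inside_before = [y for y in samples if sheet_distance(y) < 0.0]
inside_after = [y for y in samples if dilate(sheet_distance(y), 1.5 * voxel_size) < 0.0]

print("voxels inside before dilation:", len(inside_before))
print("voxels inside after dilation: ", len(inside_after))
```

The thin sheet has no interior voxels at all, while the dilated version becomes a slab a few voxels thick. Reducing the voxel size alone would not give the sheet an interior; it only samples the same thin surface more finely.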

I decided to smooth the vdb a little bit. For this, I added another filter node and chose laplacian flow to do a smoothing that preserves the volume of the vdb.

We have finished the basic setup. Now we will work on the displacement to do our terrain.

Building the terrain.

Going back to the plane nodal displacement, we have to enable subpatch and increase the viewport subdivision levels. For now, make sure to choose a value that will not crash your system. I set 16 subdivision levels for the viewport and double that for the render.

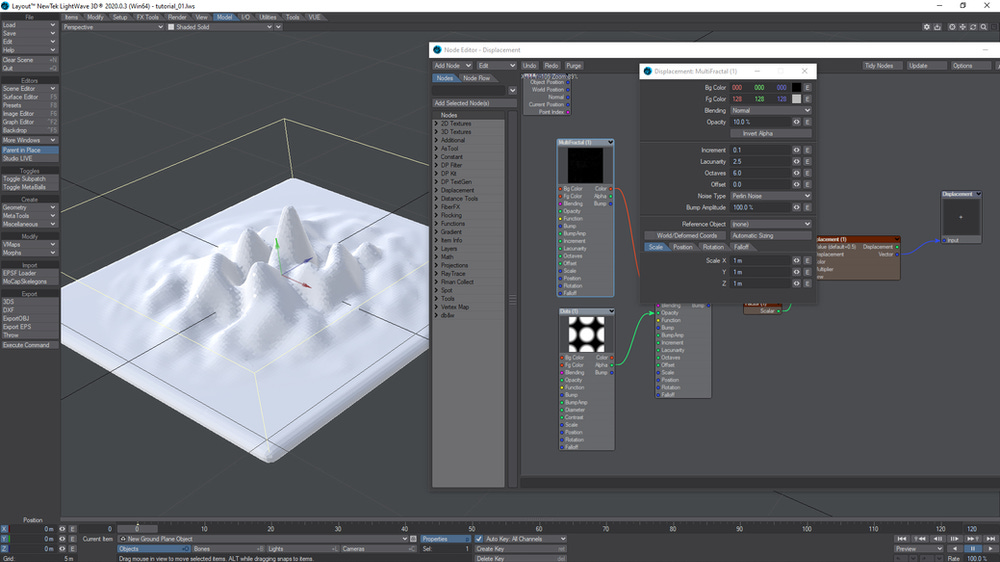

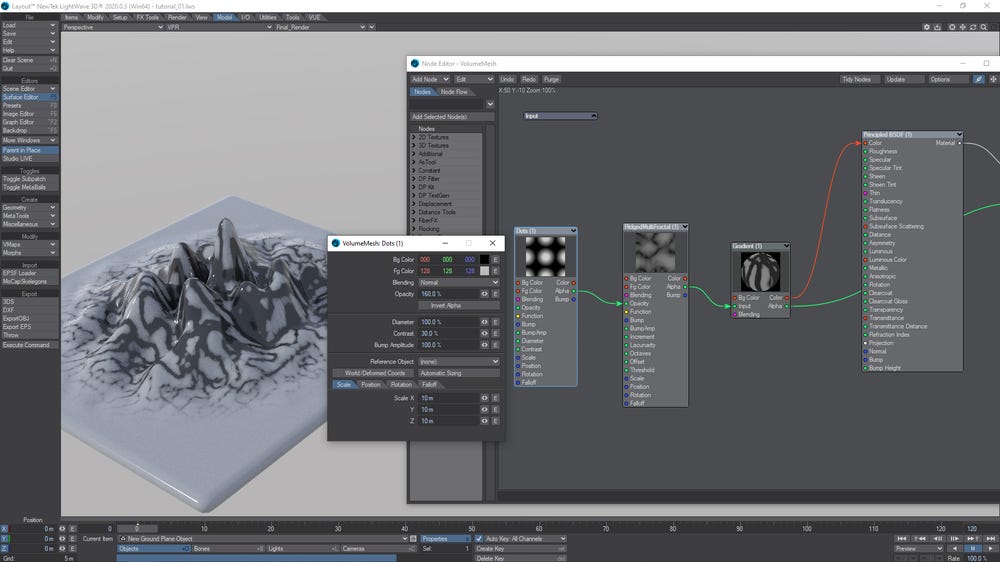

And this is my setup to start. I used a Hetero Terrain node. The other node is our already known displacement compound node.

Note that the displacement affects the whole plane. I don't want this. What I want is a circular falloff, with the displacement higher at the center. For this, I'll select a Dots procedural node, which spreads dots over the whole surface. I only need one big dot, at the center. We obtain this result by scaling the procedural to the size of the plane (10 m x 10 m). The opacity value controls how high and how intense the displacement is.

Creating the texture falloff in Lightwave is very easy because this function is built in. In the falloff tab, I set the range to 20% and the type to spherical. I recommend trying other setups: you can obtain very interesting results.

Now, we have our mask. Connect the Alpha output from the dots texture node to the Opacity slot of the Hetero Terrain texture node and Voilà!
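The idea behind the mask can be sketched in plain Python: a value that is 1 at the center of the plane and fades to 0 at the border multiplies the terrain height. The smoothstep fade below is only a stand-in for Lightwave's spherical falloff, not its actual formula, and the function names are hypothetical.

```python
import math


def radial_mask(x, z, radius):
    """Return 1.0 at the origin, fading smoothly to 0.0 at the given radius."""
    distance = math.hypot(x, z)
    t = min(distance / radius, 1.0)        # normalized distance, clamped to 0..1
    return 1.0 - t * t * (3.0 - 2.0 * t)   # inverted smoothstep


def masked_height(x, z, terrain_height, radius=5.0):
    """Scale the terrain height by the mask, like feeding Alpha into Opacity."""
    return terrain_height * radial_mask(x, z, radius)


# Walk from the center of a 10 m plane to its border along the X axis.
for x in (0.0, 2.5, 5.0):
    print(x, masked_height(x, 0.0, terrain_height=2.0))
```

With a radius of 5 m (half of the 10 m plane), the displacement is untouched at the center and vanishes at the edge of the plane.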

A lot can be done here, but we'll keep it simple. You're invited to do your own experiments.

I'll spread a small displacement on the non-affected area. I used a Multifractal texture node and put it in the background color of the Hetero Terrain node. I also increased the contrast of the dots texture to 20%.

Lookdev.

The first step is to copy the dots texture node. We'll need it for the shading. Next, we have to improve our displacement. I'm looking for a kind of alien landscape, with a crystal-like formation. For this, we need to give our displacement the appearance of a rock formation. We'll do this in the shading. The terrain looks coarse, so first I'll smooth it.

I pasted the dots texture in the node editor and took a look. That's our mask. I'll use a RidgedMultiFractal texture to do the additional displacement of the terrain.

I'm going to stretch it on the Y axis. Gradients are the way to do this. The Alpha of the RidgedMultiFractal texture is the reference (input) for the gradient. Again, I'll do a fast setup here, but there is room for a lot of experimentation.
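What a gradient does with its input can be sketched in plain Python: the input value is looked up between keys and the output is interpolated. The key positions and values below are hypothetical, chosen only to show how a soft alpha can be remapped into a steeper mask; they are not the settings used in this scene.

```python
def gradient(value, keys):
    """Piecewise-linear lookup; keys is a list of (position, output) sorted by position."""
    if value <= keys[0][0]:
        return keys[0][1]
    for (p0, v0), (p1, v1) in zip(keys, keys[1:]):
        if value <= p1:
            t = (value - p0) / (p1 - p0)   # where the input sits between two keys
            return v0 + (v1 - v0) * t
    return keys[-1][1]


# Hypothetical keys: everything below 0.4 goes to 0, everything above 0.6 goes to 1.
contrast_keys = [(0.0, 0.0), (0.4, 0.0), (0.6, 1.0), (1.0, 1.0)]

for alpha in (0.2, 0.5, 0.9):
    print(alpha, gradient(alpha, contrast_keys))
```

Moving the two middle keys closer together sharpens the transition, and moving them apart softens it.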

Configuring the first gradient color:

Configuring the second gradient color:

Stretching...

Fine tuning the mask...

Now, the displacement. We connect the Alpha output from the gradient to the displacement slot in our Displacement compound node; and the displacement output from this node to the displacement slot of the Surface node. I also increased the scale a little bit.

But we can't see this displacement yet, because this is a surface displacement. We must enable it in the object properties.

Now, when we use the VPR, a warning message will pop up. It draws attention to the number of voxels used in the object and asks if we want to cancel the operation. We'll always answer no. I also reduced the voxel size to get a more detailed SDF. This is not the finished setup yet, and this displacement is going to be improved. But for now it's OK and we can fine-tune our landscape.

I increased the plane subdivision a little bit and added a gradient to have a texture preview of the displacement.

It's time for the light setup. I used an HDRI that I made in Terragen.

And I tried to match the position of the infinite light with the sun in the HDRI map as closely as possible.

I also used the ACES tonemap. Unfortunately, NewTek didn't implement proper support for OCIO in Lightwave, and a tonemap is not the same thing. By the way, Terragen supports OCIO and the HDRI file I'm using is in the ACEScg color space.

I'll use a transmissive material, so I need to adjust some render settings: I set Ray Recursion Limit and Refraction Recursion Limit to 32. In doing so I'll have enough light bounces to render the transmission correctly.

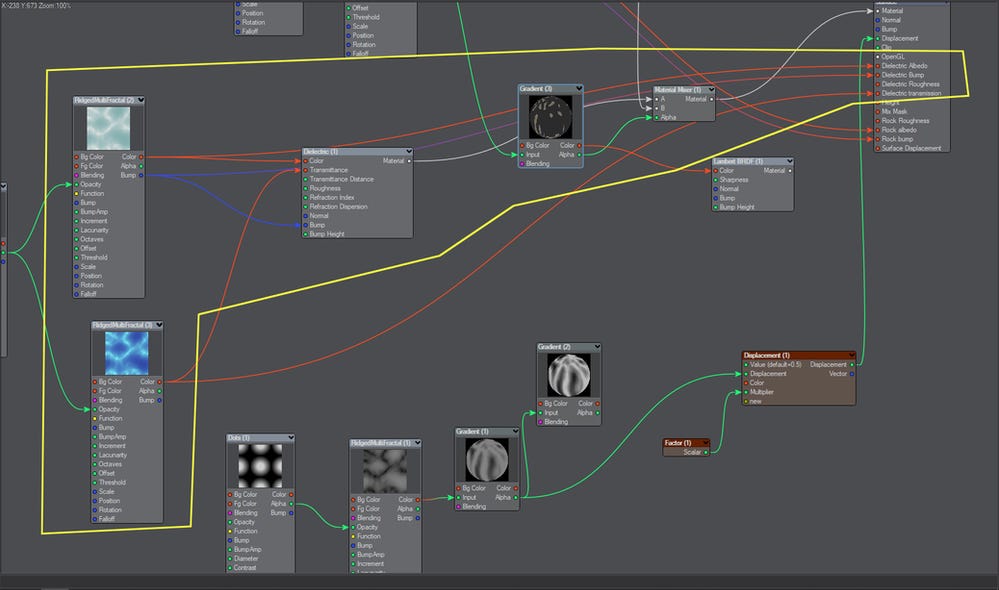

The mountain is going to be a mixture of rock and a kind of crystal. First I'll set up the crystal material, then the rock material, and then I'll mix them.

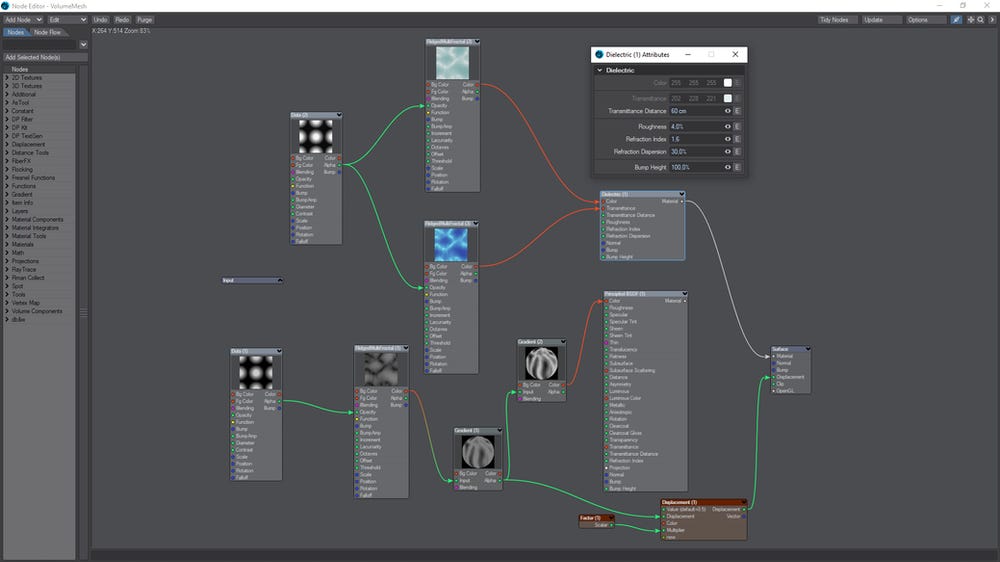

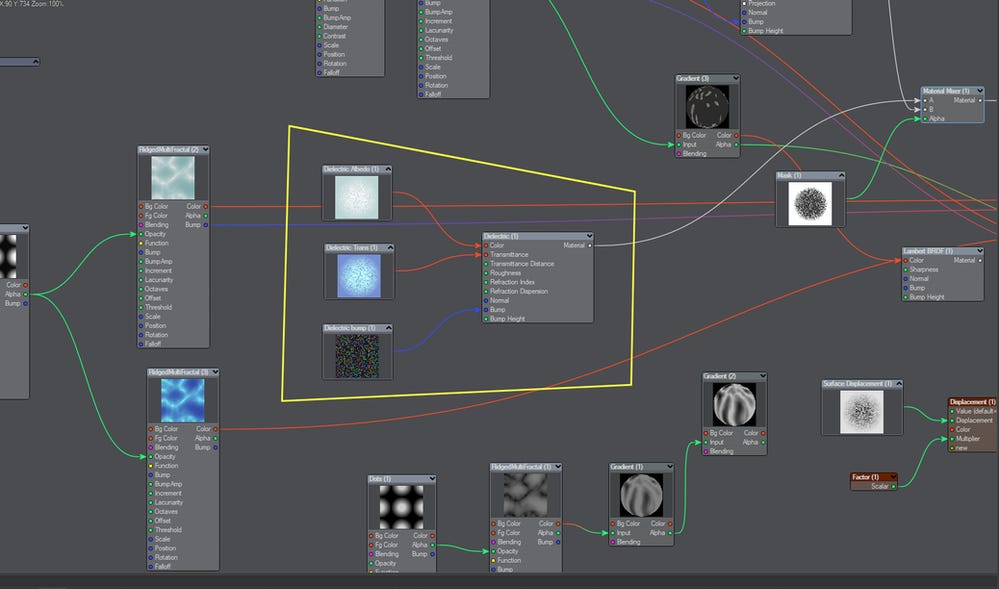

For the crystal material I used a Dielectric material node. The settings can be seen in the following picture.

I copied and pasted the RidgedMultiFractal node and the Dots texture node. The first RidgedMultiFractal is going to be the Dielectric Albedo. The second one is going to be the Transmissive Color, and I changed some parameters in the Dots texture.

And this was the result:

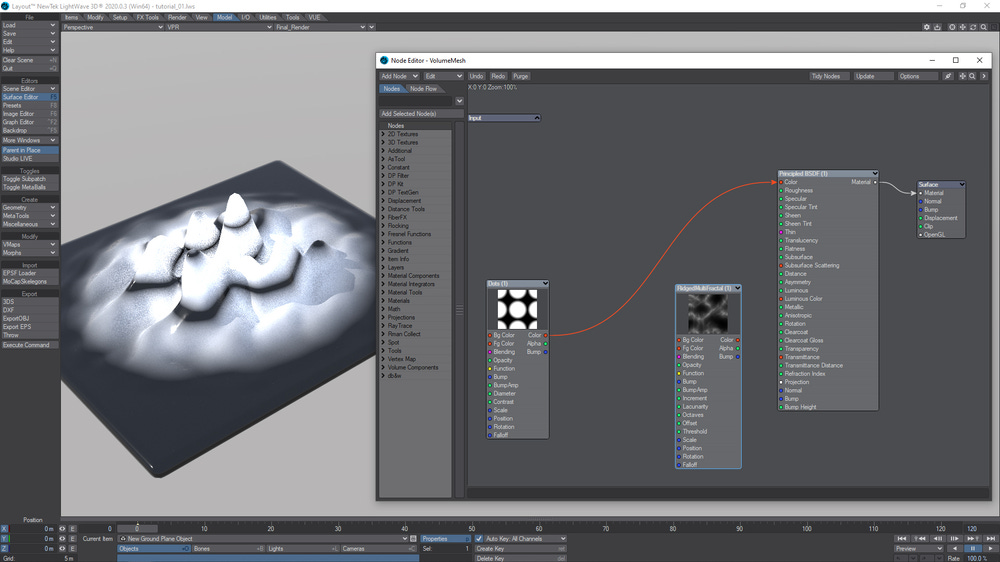

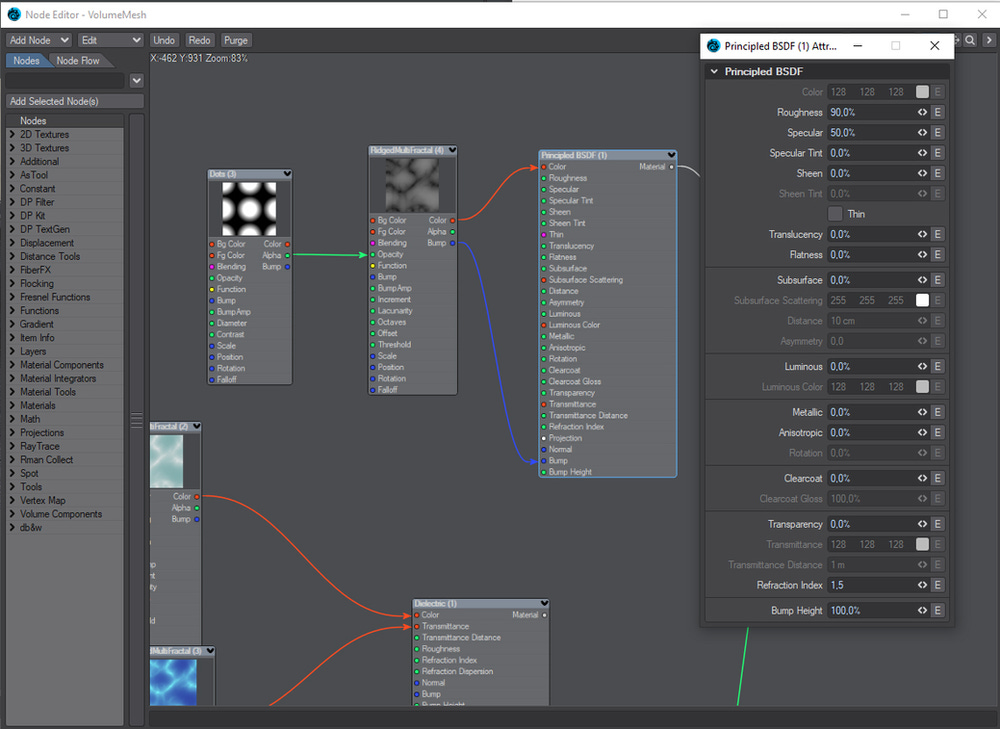

Again, I copied and pasted the Dots texture and the RidgedMultiFractal node to do the rock shading. I used the Principled Shader and set the roughness to 90%. This is enough for this tutorial. Of course, in production this shader would need a lot more work.

I made small changes to the other nodes.

Now we are going to mix the rock-sand material with the Dielectric material. We'll use a material mixer node for this.

We can fine tune the mix of the materials using a gradient node. I'll borrow the alpha of the RidgedMultiFractal node to be the driver (input) of my tweaking. The lambert material is to visualize what I am doing.

This is what we have got up to now:

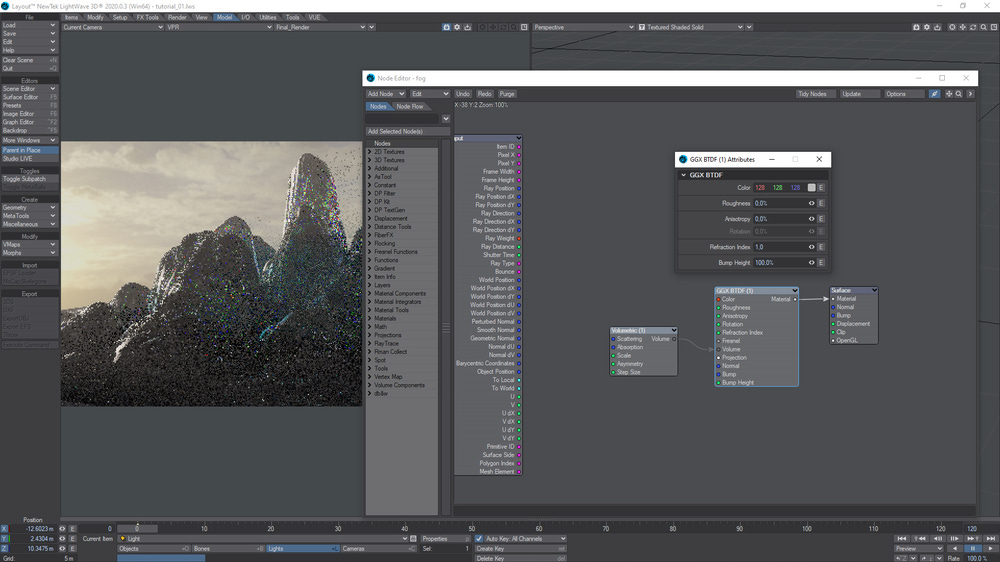

Time to add more feeling to the scene. I added a 10m by 10m box with sufficient height to completely cover the rock formation, and called it fog.

Then, I used the same technique that I showed in my tutorial "How to render volumes faster in Lightwave 3D": in the fog geometry I used a GGX BTDF material with volumetrics.

And it's a good time for a test render. I set my render settings as follows:

When we hit F9, the familiar warning pop-up will show up. We always answer "no".

Of course, with these settings our test render will be very noisy, but it will show us where we are heading.

Creating custom buffers and baking textures.

Baking the maps will allow us to export the height map to a specific software to do the erosion, for example. Besides, we'll get faster render times using images instead of a procedural network.

The problem is that we cannot bake the textures on the SDF, because it is not possible to have UVs on this type of geometry. One solution would be to save the SDF as a transformed object and, in doing so, freeze the VDB to polygons. But I don't want to do this. I noticed that Lightwave renders the VDB faster than a heavy polygon geometry. Besides, we would face problems unwrapping the frozen terrain geometry.

My solution is to use the plane object that drives the SDF displacement to bake the textures. Everything will work because, up to now, our terrain is only a procedural network. Everything comes from the plane being displaced. Therefore, we can use that plane to bake the textures and afterwards just use a planar projection to apply the images to the VDB geometry.
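Why the planar projection lines up can be sketched in plain Python: a projection along the Y axis ignores the height, so a point on the displaced plane and the point of the VDB surface above or below it receive the same texture coordinate. The function is a hypothetical helper, and the orientation of the V axis in Lightwave may differ from this sketch.

```python
def planar_uv_y(x, z, size=10.0, center=(0.0, 0.0)):
    """Map a point's X and Z to 0..1 UV space for a square of the given size.

    The Y coordinate is deliberately not an argument: a projection along
    the Y axis does not depend on height.
    """
    u = (x - center[0]) / size + 0.5
    v = (z - center[1]) / size + 0.5
    return u, v


# Corners and center of the 10 m x 10 m plane used in this scene.
for x, z in ((-5.0, -5.0), (0.0, 0.0), (5.0, 5.0)):
    print((x, z), planar_uv_y(x, z))
```

This holds as long as the displacement moves points only along Y, which is the case for a flat plane displaced along its normal.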

First, we have to send the plane geometry to Modeler to do the UV mapping, which is very simple: just a planar projection on the Y axis. But before that, I'll disable the plane subdivisions and toggle subpatch off. I'm doing this because I'll subdivide the plane in Modeler to obtain a better looking terrain, so I want to avoid any memory overload issues.

In Modeler, I subdivided the plane and did the UV mapping.

And before going back to Layout, I activated Catmull-Clark subdivision in Modeler. This will certainly change the result, for the better.

Back in Layout, look at that: the mountain is back with subpatch off. The Catmull-Clark subdivision that we did in Modeler is enough to handle the displacement.

However, we want a lot more detail. I'll play safe with 6 subpatch divisions for now. And we receive the voxels warning again: we already know what the answer is.

Rocky but blobby. First I'll decrease the surface displacement a little bit.

And I'll add another filter to the voxel mesh. This time, an erosion. This is a very sensitive node. Play with different values to see the results. I chose 0.16 and this made a great change in the look of our terrain.

And this is the result:

I decided to raise the erosion value to 0.18, and to set the patch sublevel to 64 for the display and to 96 for the renderer. The higher the subdivision of the plane, the higher the quality of the VDB mesh. Besides, we still have the option of reducing the voxel size to obtain an even more refined mesh. We have to balance both values.

And I did a test render. Almost 20 million polys and 36.6 million voxels: Lightwave handles everything perfectly.
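To get a feeling for the voxel side of this balance, here is a rough back-of-the-envelope estimate in plain Python. It assumes that a level set only stores voxels in a narrow band around the surface and that the band is 3 voxels wide on each side; the function is a hypothetical helper, and the numbers it prints are estimates for a flat surface, not measurements from this scene.

```python
def narrow_band_voxels(surface_area, voxel_size, half_width=3):
    """Estimate active voxels: surface cells times the thickness of the band.

    surface_area is in square meters, voxel_size in meters.
    half_width (in voxels) is an assumption for this sketch.
    """
    cells_on_surface = surface_area / voxel_size ** 2
    return round(cells_on_surface * 2 * half_width)


flat_plane_area = 10.0 * 10.0  # the undisplaced 10 m x 10 m plane

for size in (0.02, 0.01, 0.005):
    print(f"voxel size {size} m -> about {narrow_band_voxels(flat_plane_area, size):,} voxels")
```

Under these assumptions, halving the voxel size roughly quadruples the voxel count, so small changes in voxel size weigh much more than they seem to. A displaced terrain has more surface area than the flat plane, so the real count will be higher.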

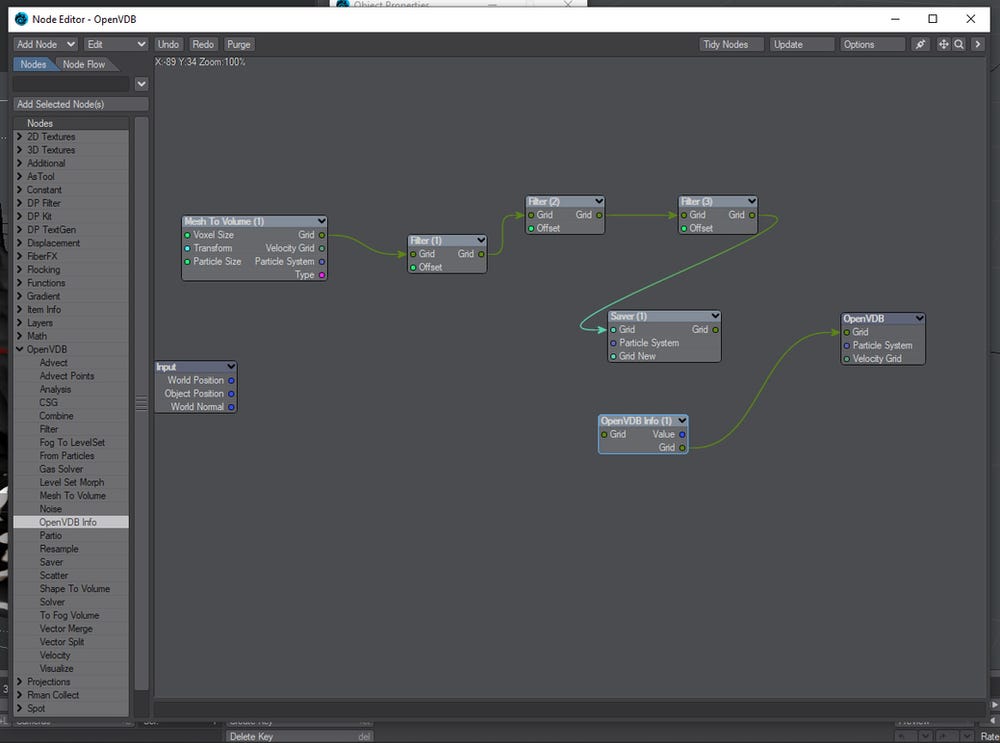

Now that I have the final form of the landscape, it's time to bake the textures. First, I'll save the terrain as a VDB file. In doing so, we get rid of the voxel warnings and of the calculations at render time. I raised the subpatch display to 96 and added a saver node in the OpenVDB node editor.

The saver node lets us save the VDB to a cache file. Afterwards, we'll load the cache using the OpenVDB info node.

For the baking procedure, I'll disable all lights and create a new camera that is going to be a surface baking camera. I'll bake 4K textures.

In the Render Properties window, we select the Buffers tab and in the Edit drop down menu, we select Create custom buffer.

We also have to activate the buffer in the Output tab. This is the buffer for the height map, so I select floating-point EXR as the file format and linear as the color space (actually, linear is not a color space, it's a transfer function; but that is a subject for another time). This is only for VPR. When it's time to save the rendered images, we can choose the file format as desired.

Now, we can see the height map in the VPR when we select the height buffer.

Copy and paste the shading network from the VDB mesh to the plane mesh node editor. Then, do the connections as follows:

And here are the buffers as shown in VPR.

After all has been set, hit F9 to render all buffers.

The Final Render

After baking the textures, we have to replace the procedural textures with the baked ones inside the VDB shading network. All textures use planar projection on the Y axis, and the scale is set using the Automatic Sizing option.

Next, we'll optimize the render settings. I'll enable Limited Region in VPR to get faster feedback.

I'll set light and volumetric samples to 1 for all lights in the scene.

Then, I'll disable adaptive sampling and test values in minimum samples until I reach a value that returns a satisfactory, noise-free result.

We divide 5120 samples by the Maximum Samples value to obtain the number of brute force rays to use: in this case, 640. For the lights, I used the default: 4 samples for each and 2 samples for the volumetric.

As for samples, the most important setting in this scene is Refraction samples. I set it to 8.

Finally, I'll cache the radiosity of the frame. Before I do this, I'll put a lambert material on the terrain: this makes the radiosity calculation faster than it would be with all the textures.
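The arithmetic behind those numbers can be written out in plain Python. The Maximum Samples value is not stated in the text; the value of 8 used below is inferred from 5120 / 640 and should be read as an assumption, and the function name is hypothetical.

```python
def brute_force_rays(sample_budget, maximum_samples):
    """Split a total sample budget evenly across the camera samples."""
    return sample_budget // maximum_samples


sample_budget = 5120
maximum_samples = 8  # assumption: inferred from 5120 / 640, not stated in the text

print(brute_force_rays(sample_budget, maximum_samples))
```

Read this way, raising Maximum Samples while keeping the same budget means fewer brute force rays per sample, and lowering it means more.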

For the secondary GI, always use Irradiance Cache. I enabled caustics and Affected by volumetrics. The rest are the default values.

After rendering you can use a denoiser of your preference, but the render result is pretty clean already.

You can also save the custom buffers to use in compositing. For the depth map, normals map and similar passes, you have to use a "real" geometry. For this, I recommend using a low subdivision level on the plane just to obtain the maps, or saving the VDB mesh as a transformed object to freeze it to polygons. However, there are other tricks that can be used. With the method shown in this tutorial, the artist has to rely on custom buffers for compositing.

The final Render:

What's beyond...

Creating compound nodes is the way to explore VDBs further in Lightwave 3D. Displacing a mesh that drives the creation of the VDB mesh is much faster than trying to create the displacement directly in the OpenVDB node editor. I imagine experiments with the procedural textures to build functions that create masks and finer ways to control the displacement. I think there are great possibilities here for terrain creation. Lightwave handles VDB very well and the saved VDB file is small, so I see the possibility of building large-scale landscapes using this method.

Lastly, maybe it is possible to create a nodal network that emulates an erosion solver. Alternatively, the VDB file can be loaded into software like Houdini for further improvements.